Eliminating spurious conflicts

May 9, 2011 Leave a comment

Copyright 2009-2011 © Gabriel Zs. K. Horvath

In my previous post I introduced software transactions with semi-mutable versioned variables. I also gave an example which created spurious conflicts in concurrent transactions. As promised I will demonstrate in this post how these conflicts can be eliminated.

Semantics

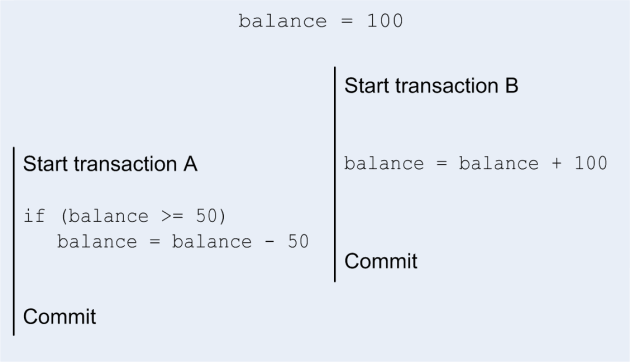

Let’s go back to the concurrent debit/credit example of our previous post:

This example describes two concurrent transactions modifying the same bank account, transaction A debits 50 if the balance is of at least 50, while transaction B credits the same account by 100. During the execution of transaction A account has a value of 100, but before committing, transaction B will have updated the value of the account to 200. Hence when transaction A commits, the value read from account (200) won’t match anymore the value read during the transaction (100) and the transaction will fail. But logically speaking, there is no reason to fail transaction A as there are enough funds available. Were the transaction to be re-started after transaction B commits, it wouldn’t fail to commit.

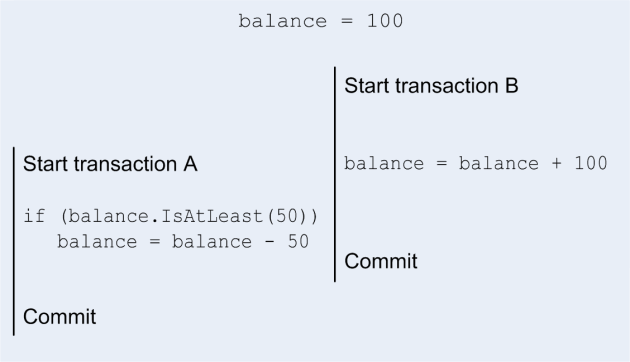

In this example we are reading the value of the balance variable to compare it to 50, and it is the result of this comparison which decides on the execution path in transaction A and not the actual value read from balance. Were we to move this comparison within the balance variable:

…the transaction would commit successfully as the value returned by IsAtLeast would in both cases be true. This encapsulation of the semantics into the variable is probably the most important way of reducing spurious conflicts. A language level implementation would not necessarily require the encapsulation of the operation within the transactional variable. But moving this semantic operation permits a number of optimizations which would otherwise be more difficult or just impossible.

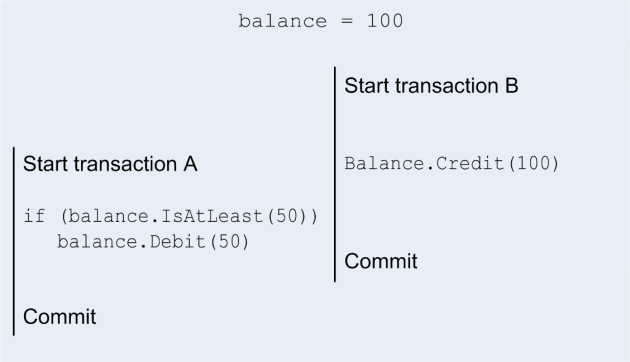

There is still a problem with our transactions, both credit and debit operations include a read of the account; this carries the risk of failing our transaction if the balance is externally modified during the execution of a transaction. But of course the operation we are performing is either to debit or credit our account. There is no reason to include a dependency on the balance in this case. The debit and credit operations do commute and hence can be performed in any order. This takes us to the other important characteristic of our software transactions: avoid unnecessary reads and expose commutative operations. So let’s encapsulate these two operations inside the balance variable:

We have now removed all unnecessary read operations on the balance of the account box, both credit and debit operations have been replaced by actual increment and decrement operations which do not involve a read operation, they are now pure write operation; this matches the actual meaning of a credit or debit operation. Transaction B is now a pure write transaction, which will always commit its changes. Transaction A is a mixed read/write transaction, and will only fail if another transaction tips the balance below 50. Now both transactions can successfully commit in parallel.

The idea of encapsulating the semantics within the variable is the key technique to remove many spurious conflicts. It works very nicely for many data types, e.g. sets, maps or counters.

Let’s have a look at the interface defined by our account object:

class Account {

public int Balance { get; }

public bool IsAtLeast(int amount);

public void Debit(int amount);

public void Credit(int amount);

}

All methods and properties perform either only read or only write operations, none of them mixes write and read operations. Avoiding combined read-write operations facilitates the creation of read-only and write-only transactions. Note that the balance can only be modified via the debit or credit methods.

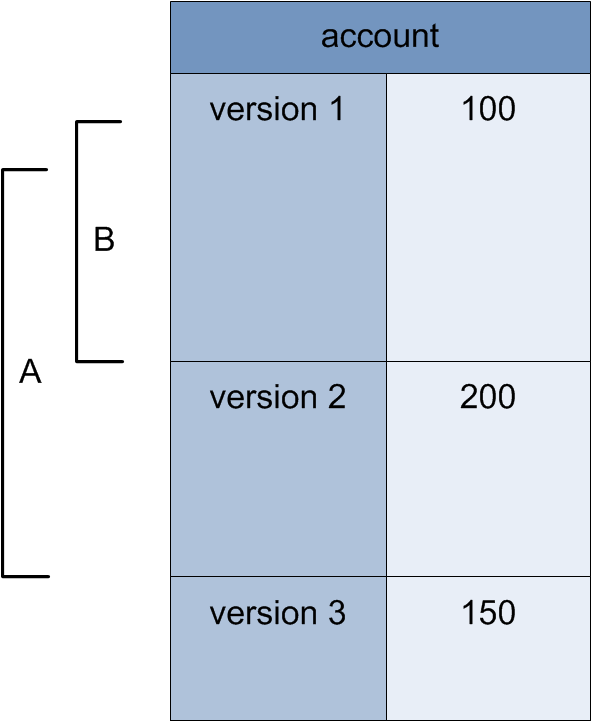

Let’s give a look at the committed versions of our account box:

So what happens during the validation of transaction A? We re-execute all the reads on the variables and IsAtLeast(50) returns the same value as when called during the execution of the atomic block, i.e. true, hence the transaction can commit. In this case committing the transaction consists of actually debiting the variable by 50. This operation is not actually performed during the execution of the atomic block, it is only recorded so that it can actually be performed at commit time. So during the commit phase the debit operation can be performed against the committed value: 200 – 50 -> 150. What happens if transaction B debits 100 instead of crediting 100? At commit time the re-execution of IsAtLeast(50) would return false instead of true, and the transaction would fail to commit, which is the expected behaviour.

Merging writes

Conceptually, both Debit and Credit operations delay their actual operation until commit time. This has essentially the effect of displacing some of the execution into the commit phase when the variables are locked, at which time the updated value of the balance is available and can be used. In fact it is sufficient to record the operation rather than merge the data; in which case the write operation represents the delta between the version at commit time and the new one. If required the merging can be performed either in the background or lazily.

Delayed operations

Other operations can be delayed to the commit phase. Consider for example the case where the value of one variable is written directly to another variable. In this case the result of the read is not used to control the execution flow of the code in the transaction and the whole operation can be delayed until commit time. Delaying operations until commit time has the disadvantage of increasing the time during which the variables are locked.

Semi-mutable transactional map

Let’s briefly look at the minimal interface for a semi-mutable maps:

public interface IMapWrite<K, V> {

V this[K key] { set; }

void Clear(K key);

}

public interface IMapRead<K, V> {

bool Lookup(K key, out V value);

bool IsKeySet(K key);

}

Contrary to some implementations the write operation does not depend on the state of the system, i.e. the indexer sets the value for the specified key, whether the key was already set or not. Same for the Clear operation, this one will just ensure that the key is not set. The Lookup query gets the value associated with a key, returns false if there isn’t any, in which case the value argument is set to its default value (default(V)), returns true and the associated value otherwise. IsKeySet just checks whether the key is set. The following C# code fragment illustrates this:

map[transaction].Clear(0);

map[transaction][0] = 10;

var b = map[transaction].IsKeySet(0)

The semantics of the map data structure simply follows the last write wins principle.

Semi-mutable transactional set

The operations for a set are defined in the following two interfaces:

interface ISetWrite<T> {

void Add(T item);

void Remove(T item);

}

interface ISetRead<T> : IEnumerable<T> {

bool Contains(T item);

IEnumerable<T> First { get; }

IEnumerable<T> Last { get; }

IEnumerable<T> Where(Func<T,bool> predicate);

}

At commit time, the operations performed inside the transaction can then simply be applied to the latest version of the set. This way the effects of multiple concurrent transactions are merged together; in case of conflict the last write wins. Note that the actual merging does not have to be performed explicitly at commit time, it is enough to record the write operation during the commit and the actual merge operation can be performed lazily.

The rich set of methods and properties on the read interface help reduce the risk of introducing spurious conflicts. For example the direct implementation of the Where operation, can remove the risk of introducing spurious conflicts by not explicitly enumerating all the elements in the set. Indeed using the Linq version would result in the explicit enumeration of all the elements of the set, any change to this set by a concurrent transaction would invalidate our transaction. By encapsulating this operation in the transactional variable, we can rerun the filter at validation time against the modified set and invalidated the transaction only if the list of items returned by this filter differs from the one returned in the atomic block. The set must be ordered to ensure that the various operations give consistent results.

Building data models from transactional variables

It is very easy to create complex data models which are versioned and transactional. Objects can use versioned variables to store their properties; objects with rich semantics can be implemented from scratch or composed using existing versioned variables. Sets and maps can be used for collections of objects or values. The level of granularity can be selected either at design time or at run-time by using reads to introduce dependencies. For example, for finely grained control, every property of an object will be a versioned variable, or the versioned variable contains whole objects for coarser object level granularity.

Partitioning

The map data structure is a good example to introduce partitioning. Partitioning is a well-known technique to reduce contention on shared resources. Consider the case where many concurrent transactions modify a map, the resultant locking of the map during the commit phase can easily become a serious bottleneck in the system. The idea of partitioning consists of splitting the map into multiple distinct sub-maps. This “spreads” the load across independent maps by mapping keys to sub-maps. The mapping will depend on the actually use case, and can be as simple as taking the modulo or truncating the key.

Partitioning is most efficient for write operations, writes performed to different sub-maps can commit concurrently. A good mapping function will minimize the risk of two concurrent transactions using the same sub-map. The same is true of the “scalar” read operations such as Lookup and IsKeySet methods which will apply to a single sub-map. Some read functions such as Count or Where will however span the whole set of sub-maps and therefore introduce a read dependency on all sub-maps, reducing somewhat the benefits gained by partitioning the data.

Partitioning doesn’t solve all contention problems, but should help in a fairly large number of cases. Partitioning can even be used for counters or the bank account class. If most of the activity on a counter consists of pure write operations, one can implement a counter as a collection of sub counters, every transaction can then pick a different counter to increment so as to reduce the chance of two transactions picking the same sub-counter. However a read operation on the counter to read the current value of the counter would result in all sub-counters being locked for read access at commit time (unless of course the read is carried out in a read-only transaction). Similarly a bank account can also be partitioned, and a BalanceIsAtLeast read operation might actually enrol only a small number of sub-account boxes (just the number required to return a positive result or all of them if returning a negative result).